(12) Scrapy: Running the Crawl

To run the spider and scrape data, run the following command from inside the first_scrapy directory:

scrapy crawl first

Here, first is the spider name that was specified when the spider was created.
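If the crawl needs to be started from a script rather than typed by hand, the same command can be wrapped in a few lines of Python. This is only a sketch: the helper names `crawl_command` and `run_crawl` are hypothetical and not part of Scrapy, and it assumes the `scrapy` executable is on the PATH.

```python
import subprocess
from pathlib import Path
from typing import List


def crawl_command(spider_name: str) -> List[str]:
    """Build the argument list for `scrapy crawl <spider_name>`."""
    return ["scrapy", "crawl", spider_name]


def run_crawl(spider_name: str, project_dir: Path) -> int:
    """Run the crawl from inside the project directory and return the exit code."""
    # The working directory matters: Scrapy finds the project through the
    # scrapy.cfg file in the current directory or one of its parents.
    completed = subprocess.run(crawl_command(spider_name), cwd=project_dir)
    return completed.returncode
```

Calling `run_crawl("first", Path("first_scrapy"))` would then be the scripted counterpart of the command above.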

Once the spider starts crawling, you will see output like the following:

D:\first_scrapy>scrapy crawl first

2016-10-03 10:40:10 [scrapy] INFO: Scrapy 1.1.2 started (bot: first_scrapy)

2016-10-03 10:40:10 [scrapy] INFO: Overridden settings: {'NEWSPIDER_MODULE': 'first_scrapy.spiders', 'SPIDER_MODULES': ['first_scrapy.spiders'], 'ROBOTSTXT_OBEY': True, 'BOT_NAME': 'first_scrapy'}

2016-10-03 10:40:10 [scrapy] INFO: Enabled extensions:

['scrapy.extensions.logstats.LogStats',

'scrapy.extensions.telnet.TelnetConsole',

'scrapy.extensions.corestats.CoreStats']

2016-10-03 10:40:11 [scrapy] INFO: Enabled downloader middlewares:

['scrapy.downloadermiddlewares.robotstxt.RobotsTxtMiddleware',

'scrapy.downloadermiddlewares.httpauth.HttpAuthMiddleware',

'scrapy.downloadermiddlewares.downloadtimeout.DownloadTimeoutMiddleware',

'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware',

'scrapy.downloadermiddlewares.retry.RetryMiddleware',

'scrapy.downloadermiddlewares.defaultheaders.DefaultHeadersMiddleware',

'scrapy.downloadermiddlewares.redirect.MetaRefreshMiddleware',

'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware',

'scrapy.downloadermiddlewares.redirect.RedirectMiddleware',

'scrapy.downloadermiddlewares.cookies.CookiesMiddleware',

'scrapy.downloadermiddlewares.chunked.ChunkedTransferMiddleware',

'scrapy.downloadermiddlewares.stats.DownloaderStats']

2016-10-03 10:40:11 [scrapy] INFO: Enabled spider middlewares:

['scrapy.spidermiddlewares.httperror.HttpErrorMiddleware',

'scrapy.spidermiddlewares.offsite.OffsiteMiddleware',

'scrapy.spidermiddlewares.referer.RefererMiddleware',

'scrapy.spidermiddlewares.urllength.UrlLengthMiddleware',

'scrapy.spidermiddlewares.depth.DepthMiddleware']

2016-10-03 10:40:11 [scrapy] INFO: Enabled item pipelines:

[]

2016-10-03 10:40:11 [scrapy] INFO: Spider opened

2016-10-03 10:40:11 [scrapy] INFO: Crawled 0 pages (at 0 pages/min), scraped 0 items (at 0 items/min)

2016-10-03 10:40:11 [scrapy] DEBUG: Telnet console listening on 127.0.0.1:6023

2016-10-03 10:40:11 [scrapy] DEBUG: Crawled (200) <GET http://www.yiibai.com/robots.txt> (referer: None)

2016-10-03 10:40:11 [scrapy] DEBUG: Crawled (200) <GET http://www.yiibai.com/scrapy/scrapy_create_project.html> (referer: None)

2016-10-03 10:40:11 [scrapy] DEBUG: Crawled (200) <GET http://www.yiibai.com/scrapy/scrapy_environment.html> (referer: None)

Curent URL => scrapy_create_project.html

Curent URL => scrapy_environment.html

2016-10-03 10:40:12 [scrapy] INFO: Closing spider (finished)

2016-10-03 10:40:12 [scrapy] INFO: Dumping Scrapy stats:

{'downloader/request_bytes': 709,

'downloader/request_count': 3,

'downloader/request_method_count/GET': 3,

'downloader/response_bytes': 15401,

'downloader/response_count': 3,

'downloader/response_status_count/200': 3,

'finish_reason': 'finished',

'finish_time': datetime.datetime(2016, 10, 3, 2, 40, 12, 98000),

'log_count/DEBUG': 4,

'log_count/INFO': 7,

'response_received_count': 3,

'scheduler/dequeued': 2,

'scheduler/dequeued/memory': 2,

'scheduler/enqueued': 2,

'scheduler/enqueued/memory': 2,

'start_time': datetime.datetime(2016, 10, 3, 2, 40, 11, 614000)}

2016-10-03 10:40:12 [scrapy] INFO: Spider closed (finished)

D:\first_scrapy>

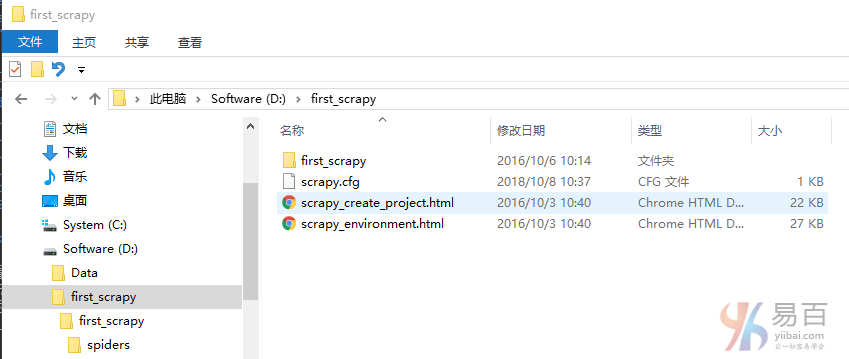

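As the output shows, there is one log line per URL. (referer: None) means the request has no referring page: the two .html pages are start URLs, and robots.txt is requested by Scrapy itself because ROBOTSTXT_OBEY is enabled. After the crawl, two files named scrapy_create_project.html and scrapy_environment.html should have been created in the first_scrapy directory.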
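To make the reading of those log lines concrete, the sketch below pulls the status, URL and referer out of each "Crawled" line and derives the file name expected for each page. The names `CrawledLine`, `parse_crawled` and `saved_file_name` are hypothetical helpers, not Scrapy APIs, and the naming rule (last segment of the URL path) is an assumption inferred from the "Curent URL =>" lines in the output.

```python
import re
from typing import List, NamedTuple, Optional
from urllib.parse import urlparse


class CrawledLine(NamedTuple):
    status: int
    url: str
    referer: Optional[str]  # None: the request had no referring page


# Matches the message part of a log line, for example:
#   Crawled (200) <GET http://example.com/page.html> (referer: None)
CRAWLED_RE = re.compile(
    r"Crawled \((?P<status>\d+)\) <GET (?P<url>\S+)> \(referer: (?P<referer>[^)]*)\)"
)


def parse_crawled(log_text: str) -> List[CrawledLine]:
    """Extract every 'Crawled' entry found in a block of Scrapy log text."""
    results = []
    for match in CRAWLED_RE.finditer(log_text):
        referer = match.group("referer")
        results.append(CrawledLine(
            status=int(match.group("status")),
            url=match.group("url"),
            referer=None if referer == "None" else referer,
        ))
    return results


def saved_file_name(url: str) -> str:
    """Last path segment of the URL (assumed to be the saved file's name)."""
    return urlparse(url).path.rsplit("/", 1)[-1]


# The three DEBUG lines from the output above.
SAMPLE_LOG = """\
2016-10-03 10:40:11 [scrapy] DEBUG: Crawled (200) <GET http://www.yiibai.com/robots.txt> (referer: None)
2016-10-03 10:40:11 [scrapy] DEBUG: Crawled (200) <GET http://www.yiibai.com/scrapy/scrapy_create_project.html> (referer: None)
2016-10-03 10:40:11 [scrapy] DEBUG: Crawled (200) <GET http://www.yiibai.com/scrapy/scrapy_environment.html> (referer: None)
"""

crawled = parse_crawled(SAMPLE_LOG)
# Skip robots.txt: it is fetched by RobotsTxtMiddleware, not by the spider.
page_files = [saved_file_name(c.url) for c in crawled
              if not c.url.endswith("/robots.txt")]
```

Comparing `page_files` with the contents of the first_scrapy directory is a quick way to check that every crawled page was actually written to disk.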

